Build Cost-Efficient AI Workloads with EGS

Combining GPU Power with Avesha's Kubernetes Expertise for an Unmatched GPU Provisioning Platform

Modern AI workflows require seamless collaboration between CPUs and GPUs. Avesha orchestrates the perfect balance between compute resources, ensuring your training, inference, and real-time applications run with maximum efficiency. By intelligently managing workloads across CPU and GPU infrastructures, we unlock the full potential of hybrid compute environments.

Built for real-world AI operations

Reliability

- Load balancing across clusters/regions
- Automated failover for capacity interruptions
- Multi-cluster routing controls for low latency
- High availability patterns for inference services

Efficiency

Control & Governance

Who we empower

Neocloud providers

Empower the Next Wave of Cloud Innovation. Avesha’s intelligent orchestration unifies GPU and CPU operations—so you can build, scale, and deliver next-gen cloud services effortlessly.

Offer GPU-as-a-Service with precision scheduling and dynamic allocation—maximizing utilization while cutting infrastructure overhead.

Expand compute capacity on demand—auto-scale seamlessly to handle customer surges without latency or resource waste.

Deliver secure, efficient multi-tenant environments with built-in boundaries that protect performance and data integrity.

Enterprise AI

Supercharge your R&D teams with infrastructure built for speed, scale, and intelligence. Avesha delivers the performance backbone modern AI demands.

Distribute compute-intensive workloads intelligently across GPUs and CPUs—cut training time, boost iteration speed, and get to results faster.

Run AI pipelines effortlessly across hybrid and multi-cloud environments—no bottlenecks, no rewrites, just continuous throughput.

Deliver real-time, low-latency predictions that keep pace with production workloads—powering responsive, high-performance AI experiences.

Purpose-built for data scientists, hybrid AI teams, and platform engineers.

- 45% increase in node allocations
- 30% reduction (capacity + locality aware)
- 47% reduction in GPU cost

Key Capabilities

Elastic Resource Allocation

Automatically rebalance GPU and CPU capacity in real time to meet dynamic workload demands—idle slots are reclaimed, tasks finish faster, and costs shrink.

Spot GPU Harnessing

Tap into discounted spot-instance GPUs for non-critical or batch AI jobs—keeping performance high while lowering compute spend.

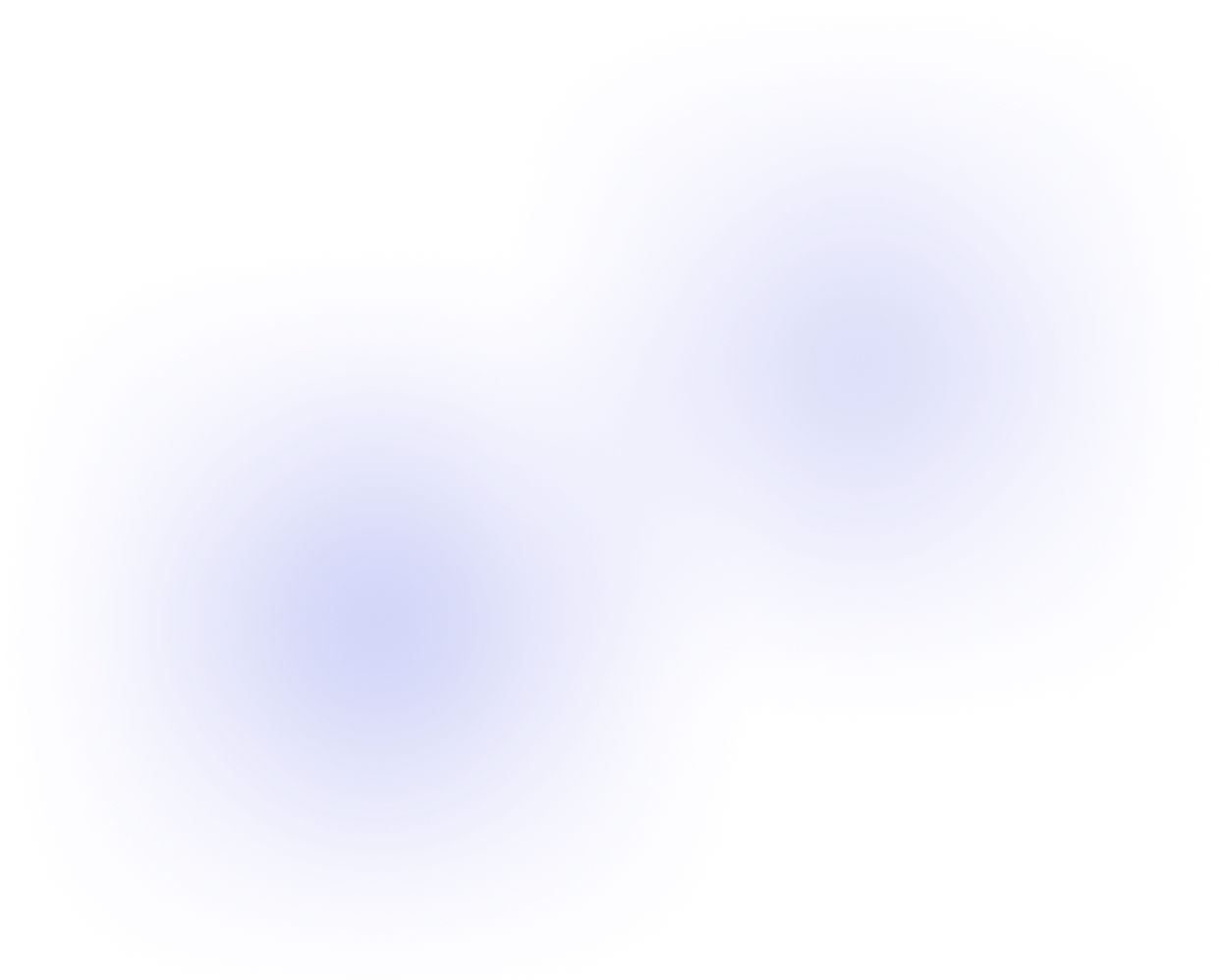

Live-Pulse Observability

Gain a clear, continuous view into your GPU infrastructure with live alerts and predictive anomaly detection—so issues are caught before they impact ML pipelines.

Smart Cost Engineering

Forecast and shape your GPU spend proactively—set priority tiers, control access, and fine-tune resource mapping to balance fairness and budget.

Workflow-Aware Orchestration

Let your AI workflows flow seamlessly across clusters and clouds—EGS understands DAGs, task dependencies, and pipeline rhythm so you don’t have to sweat scheduling.
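To illustrate what dependency-aware scheduling means in practice, here is a minimal Python sketch that orders pipeline tasks so each one runs only after its upstream tasks. It uses Python's standard `graphlib` module and is not EGS code; the task names and the `execution_order` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
pipeline = {
    "prepare_data": set(),
    "train": {"prepare_data"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}

def execution_order(dag):
    """Return the tasks in an order that respects every dependency."""
    return list(TopologicalSorter(dag).static_order())

order = execution_order(pipeline)
print(order)
```

A real orchestrator would also weigh cluster capacity and data locality when placing each task; this sketch covers only the ordering step.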

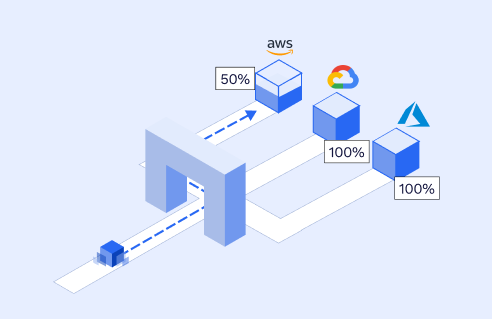

True Multi-Cloud Independence

Run your AI workloads wherever they belong—on-prem, public, or hybrid—while maintaining tenant isolation, data sovereignty, and operational consistency.

Autonomous GPU Operations

From idle-to-active in seconds—EGS automates deployment, re-assignment, scaling, and remediation of your GPU stack so engineers focus on models, not infrastructure.

Tenant-Aware Platform Governance

Enable multiple teams or business units to share GPU infrastructure safely—assign roles, priorities, and quotas per namespace while maintaining rock-solid isolation.
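As a rough picture of per-namespace quotas, the Python sketch below admits a GPU request only when it keeps the namespace within its limit. The `NamespaceQuota` type, the `try_allocate` helper, and the namespace names are all hypothetical and do not reflect the EGS API.

```python
from dataclasses import dataclass

@dataclass
class NamespaceQuota:
    """Hypothetical per-namespace GPU quota record (not an EGS type)."""
    gpu_limit: int        # maximum GPUs the namespace may hold at once
    gpus_in_use: int = 0  # GPUs currently granted to the namespace

def try_allocate(quotas, namespace, gpus_requested):
    """Grant the request only if it keeps the namespace within its limit."""
    quota = quotas.get(namespace)
    if quota is None or gpus_requested <= 0:
        return False
    if quota.gpus_in_use + gpus_requested > quota.gpu_limit:
        return False
    quota.gpus_in_use += gpus_requested
    return True

quotas = {
    "research": NamespaceQuota(gpu_limit=8),
    "production": NamespaceQuota(gpu_limit=16),
}

first = try_allocate(quotas, "research", 6)   # fits within the limit of 8
second = try_allocate(quotas, "research", 4)  # 6 + 4 exceeds 8, so it is refused
print(first, second, quotas["research"].gpus_in_use)
```

Keeping the check and the counter update in one function means a refused request never changes the recorded usage.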

Power the Future of Hybrid AI with EGS

If you can relate to the problems we solve and are interested in our products

Copyright © Avesha 2026. All rights reserved.